Robotics and autonomous systems form a fast-evolving, high-technology field of engineering that spans a wide range of theory and applications, including computational architectures; human-robot interfaces; manipulation and locomotion; planning and navigation; sensing and perception; machine learning and adaptation; and self-calibration and repair.

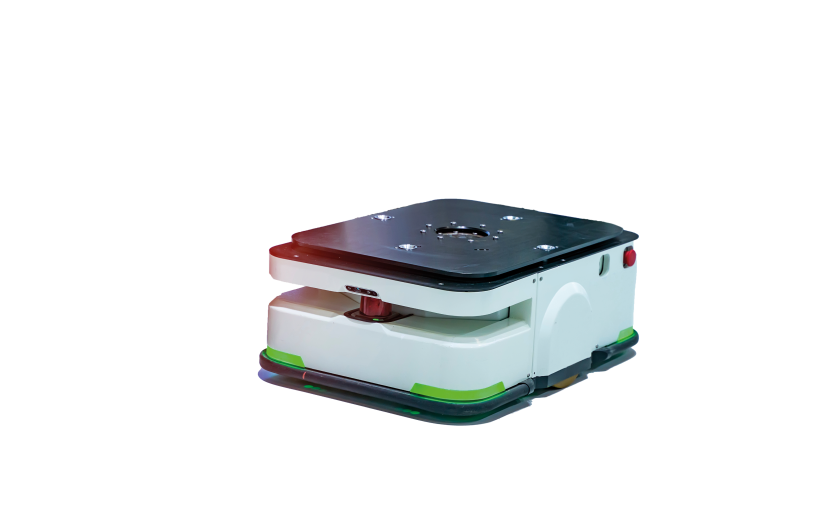

Autonomous mobile robots, sometimes abbreviated to AMRs but more commonly called simply autonomous robots, sit at the intersection of these disciplines. But what does autonomy mean in the context of robotics, and how does an autonomous robot differ from a conventional robotic system?

What is an Autonomous Robot?

Robots have been a mainstay of industrial settings for decades, but few of them could truly be classified as autonomous systems. Human beings have autonomy because we are capable of advanced decision-making: we can process information, draw conclusions, and carry out a host of executive functions independently. Autonomous robots are defined by similar capabilities. If a robot can perceive its immediate surroundings and then move or manipulate objects based on its own internal decision-making, it can be described as truly autonomous.

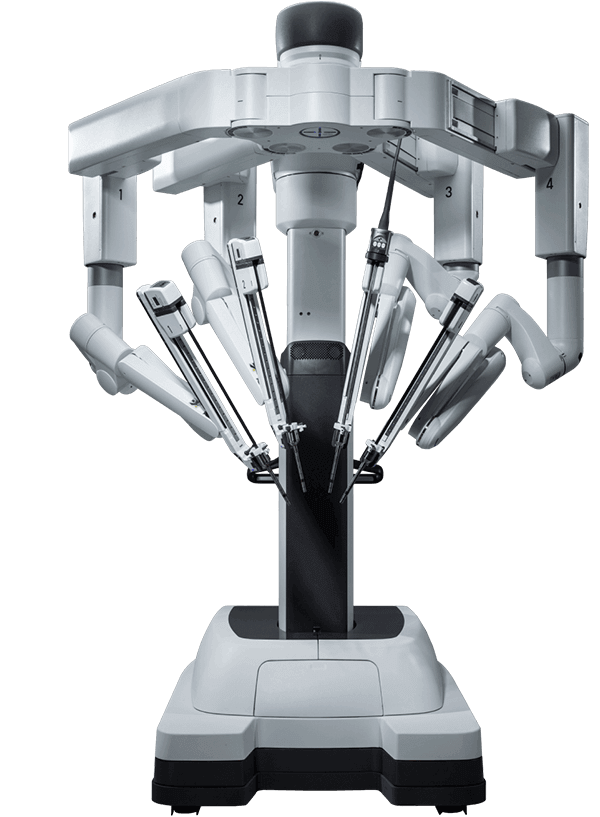

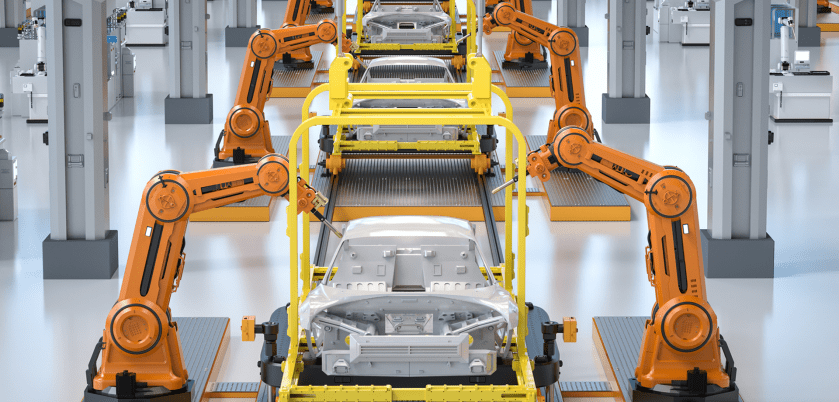

It is important to distinguish truly autonomous robots from pre-programmed machines and automated actuators, which typically operate under remote human control or intervention, or rely on complex guidance systems. Robot arms on an assembly line, for example, execute complex but pre-programmed routines: they are equipped only to carry out the same repetitive motions and cannot react to changing circumstances.

Key Components of Autonomous Robots

To act autonomously, a robotic system must be equipped with sensory components, information-processing capabilities, and some form of actuation.
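The way these three components fit together can be sketched as a simple sense, decide, act loop. This is only an illustrative outline, not a real robot API: the `Reading` and `Command` types, the `decide` rule, and the 1.0 m safety threshold are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Reading:
    """One sensor sample: distance to the nearest obstacle ahead, in metres."""
    obstacle_distance_m: float


@dataclass
class Command:
    """One actuator command: forward speed (m/s) and turn rate (rad/s)."""
    speed_mps: float
    turn_rate_rps: float


def decide(reading: Reading, safe_distance_m: float = 1.0) -> Command:
    """Processing step: stop and turn when an obstacle is too close, else drive on."""
    if reading.obstacle_distance_m < safe_distance_m:
        return Command(speed_mps=0.0, turn_rate_rps=0.5)
    return Command(speed_mps=0.5, turn_rate_rps=0.0)


def run_loop(sense: Callable[[], Reading],
             act: Callable[[Command], None],
             steps: int) -> None:
    """Run the sense -> decide -> act cycle a fixed number of times."""
    for _ in range(steps):
        reading = sense()          # 1. sensing
        command = decide(reading)  # 2. information processing
        act(command)               # 3. actuation


if __name__ == "__main__":
    # Simulated sensor (hypothetical data): an obstacle approaches, then the path clears.
    samples = iter([3.0, 1.5, 0.8, 2.5])
    log: List[Command] = []
    run_loop(lambda: Reading(next(samples)), log.append, steps=4)
    for cmd in log:
        print(cmd)
```

Passing `sense` and `act` in as plain callables keeps the decision logic separate from any particular sensor or motor driver, so the same loop can be exercised with simulated data, as it is here.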

Most autonomous robots are equipped with some form of optical sensing so the machine can “see” its surroundings. A whole suite of photonics solutions enables next-generation robotic systems to perceive critical information such as distance and orientation, which serves as a crucial input for subsequent decision-making and action. For example, NASA's Curiosity Mars rover carries Mastcam, a pair of mast-mounted cameras used to capture full-color images and video of the surrounding environment, including panoramic views.

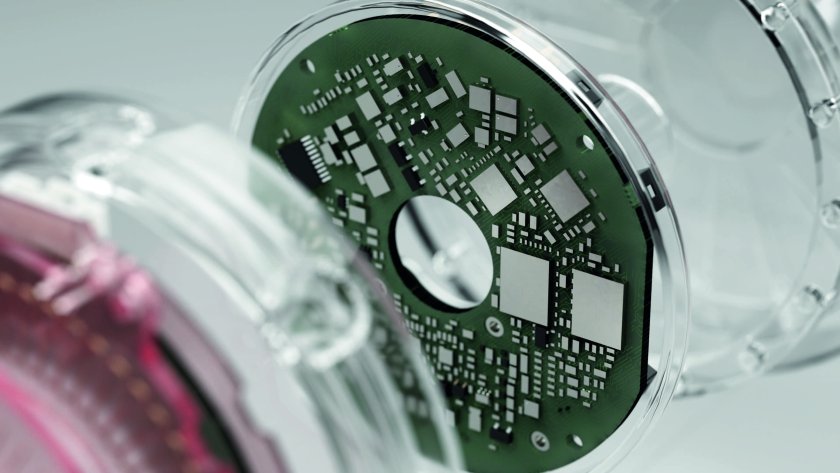

The computational side of an autonomous robot is usually built around machine learning and artificial intelligence, using algorithmic analysis to interpret sensor data and make decisions in real time. Such an embedded system can, for example, read visual data to determine the distance between the robot and an obstacle, then adjust course to navigate around it.
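One common way to turn visual data into a distance is stereo vision, where depth follows from the disparity between two camera images: distance = focal length × baseline / disparity. The sketch below assumes a calibrated stereo pair and is not taken from any particular robot; the function names, the 1.5 m safety threshold, and the steer-away rule are hypothetical choices for illustration.

```python
def stereo_distance_m(focal_length_px: float,
                      baseline_m: float,
                      disparity_px: float) -> float:
    """Estimate the distance to a point seen by both cameras of a stereo pair.

    focal_length_px: camera focal length, in pixels
    baseline_m:      separation between the two cameras, in metres
    disparity_px:    horizontal shift of the point between the two images, in pixels
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a visible point")
    return focal_length_px * baseline_m / disparity_px


def steering_command(distance_m: float,
                     obstacle_offset_px: float,
                     safe_distance_m: float = 1.5) -> str:
    """Pick a course adjustment from the obstacle's distance and image position.

    obstacle_offset_px is the obstacle's horizontal offset from the image centre:
    negative means it lies to the left, positive (or zero) to the right.
    """
    if distance_m >= safe_distance_m:
        return "straight"
    # Obstacle is too close: steer away from the side it is on.
    return "right" if obstacle_offset_px < 0 else "left"


if __name__ == "__main__":
    # Hypothetical numbers: 700 px focal length, 12 cm baseline, 60 px disparity.
    distance = stereo_distance_m(700.0, 0.12, 60.0)
    print(f"obstacle at {distance:.2f} m -> {steering_command(distance, -40.0)}")
```

With these example values the obstacle is estimated at about 1.4 m, inside the assumed safety margin, so the rule steers away from it. A production system would typically fuse several sensors and plan a full path rather than apply a single threshold.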

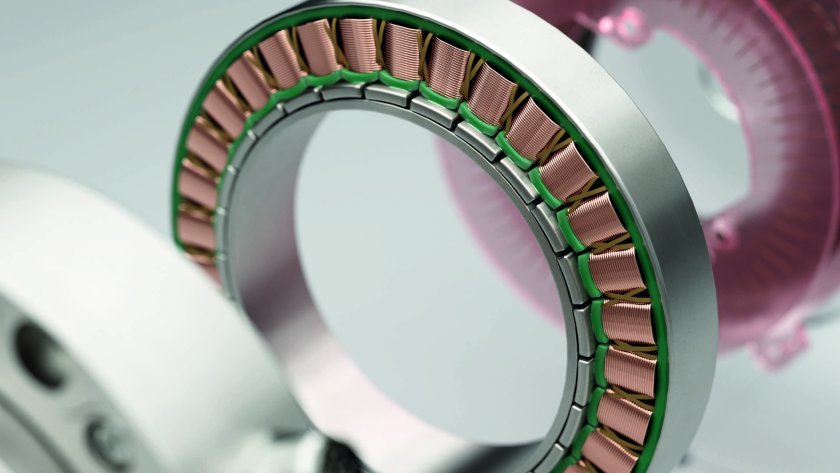

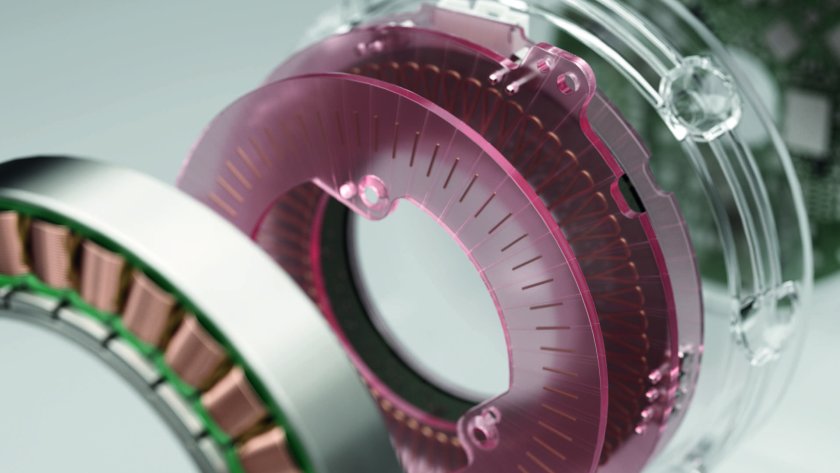

While the sensors and the processor collect and interpret data, it is typically motors that are actuated to carry out the desired function. For example, UVD robots automate critical disinfection runs in clinical settings, using AMRs equipped with ultraviolet lamps to sterilize surfaces. This requires careful motion tracking and compact, high-performance servos that can actuate movement with high power efficiency, so that operations can run uninterrupted for extended periods.

Interested in Autonomous Robotics?

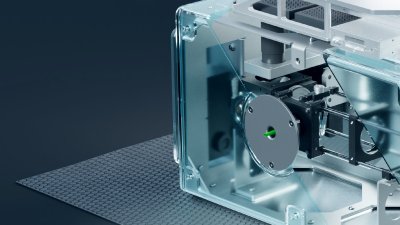

In robotics and autonomous systems, it is important to establish continuous communication between all three key components (sensor, processor, and actuator). At Celera Motion, we have extensive experience producing compact servo drives and systems compatible with Wi-Fi and Bluetooth modules for reporting system information. If you would like more information, contact a member of the team today.